Asana AI Visibility Benchmark 2026: GEO Data Across ChatGPT, Perplexity and Google AI



XLR8 AI ran 45 structured queries to measure how often Asana appears when buyers ask AI assistants about work management, project management and team collaboration software. The headline: strong but not dominant — and one model that could be costing Asana serious pipeline.

Key Results

- 84.4% Overall visibility - 38 of 45 queries

- #3.6 Avg position when mentioned

- 39 Total mentions across all models

- 60% Perplexity rate vs 100% on Google AI

When a VP of Operations asks ChatGPT "what's the best work management software for my team," does Asana show up? And when they ask Perplexity? And Google AI Mode? The answers are not the same — and the gap between them has pipeline consequences that traditional marketing analytics will never surface.

XLR8 AI, a GEO (Generative Engine Optimization) tracking platform, ran a structured 45-query experiment across three major AI models on April 15, 2026 to benchmark exactly where Asana stands in AI-driven software discovery. This article breaks down every number.

About this benchmark

This data was produced by XLR8 AI, a GEO tracking and optimization platform. XLR8 AI ran 45 intent-aligned discovery queries across Google AI Mode, GPT Fast (ChatGPT), and Perplexity on April 15, 2026, logging brand mentions, average rank position, and competitor co-occurrences. marketingforllms.com publishes this data as an editorial case study for B2B SaaS marketers learning about GEO.

Methodology

How XLR8 AI Ran the Asana GEO Experiment

The Asana GEO experiment used 45 queries across three categories that map directly to Asana's core positioning: Work Management Software, Team Collaboration Platform, and Project Management Platform. Each model received 15 queries (45 runs in total), and XLR8 AI's platform logged three metrics per run: whether Asana was mentioned (yes/no), its rank position when it appeared, and which competitor brands appeared in the same answer.
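To make the three logged metrics concrete, here is a minimal sketch of what one run record and its aggregation could look like. The `QueryRun` class, the `summarize` helper, and the sample rows are hypothetical illustrations, not XLR8 AI's actual schema or raw data.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class QueryRun:
    """One query executed against one model (hypothetical schema)."""
    model: str                # e.g. "Perplexity"
    category: str             # e.g. "Work Management Software"
    query: str
    mentioned: bool           # was the brand named in the answer?
    position: Optional[int]   # rank in the answer's list, None if absent
    competitors: list = field(default_factory=list)


def summarize(runs):
    """Visibility rate over all runs; mean position over runs with a mention."""
    if not runs:
        raise ValueError("no runs to summarize")
    positions = [r.position for r in runs if r.mentioned and r.position is not None]
    return {
        "visibility": sum(r.mentioned for r in runs) / len(runs),
        "avg_position": sum(positions) / len(positions) if positions else None,
    }


# Hypothetical sample rows, not taken from the benchmark's raw records.
sample = [
    QueryRun("Google AI Mode", "Work Management Software",
             "best work management software", True, 2, ["Wrike"]),
    QueryRun("Perplexity", "Project Management Platform",
             "enterprise project portfolio management", False, None,
             ["Celoxis", "Wrike"]),
    QueryRun("GPT Fast", "Team Collaboration Platform",
             "team collaboration platform for remote teams", True, 4,
             ["monday.com"]),
]

summary = summarize(sample)
print(summary)
```

Keeping `position` as `None` when the brand is absent means the average position is computed only over answers that mention the brand, which matches the "avg position when mentioned" metric reported in Key Results.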

The three models tested — Google AI Mode, GPT Fast (ChatGPT), and Perplexity — represent the primary AI interfaces that B2B software buyers use for vendor research in 2026. Running the same query set across all three exposes model-specific gaps that single-model testing would miss entirely.

Headline results

Asana's 2026 AI Visibility Snapshot

84.4%

Overall AI visibility across 45 queries. Asana appeared in 38 of 45 discovery queries. Average position of #3.6 means it is near — but not at — the top of recommendation lists. It is consistently part of the AI conversation for work management, but not yet the default first answer.

84.4% overall is a strong baseline for any SaaS brand — most tools in this experiment's competitive set would be happy to achieve it. But the combination of a #3.6 average position and a 40-point Perplexity gap reveals two distinct and actionable problems. Being mentioned at position #3–4 means Asana is rarely the brand that shapes the buyer's first impression. And missing from 40% of Perplexity answers means a significant slice of enterprise-intent research is happening without Asana in the room.

Model performance

ChatGPT, Perplexity and Google AI: Where Asana Wins and Where It Falls Short

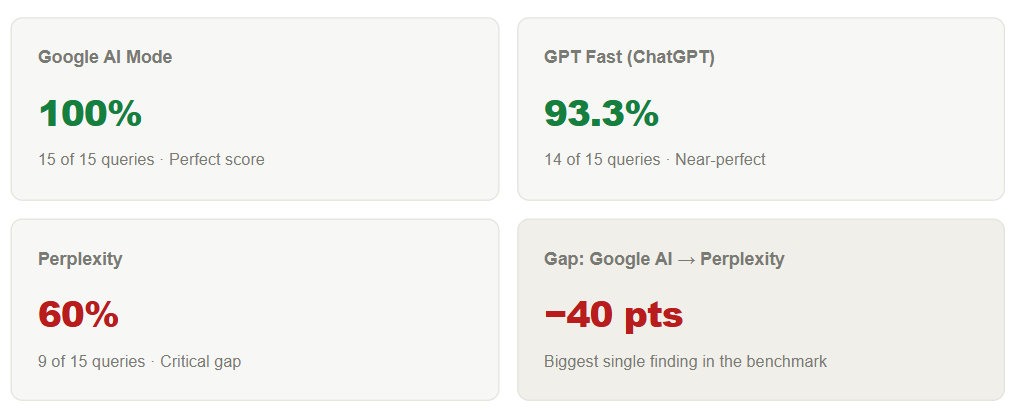

Biggest single finding in the benchmark

Google AI Mode: Perfect 100% — Asana's strongest channel

In Google AI Mode, Asana appeared in every single one of the 15 queries tested. This reflects Asana's strong web authority, structured content, and Google-indexed footprint. When Google's AI layer assembles work management and project management answers, Asana is always in the room. For GEO strategy, Google AI Mode is where Asana should focus on improving rank order (moving from #3–4 to #1–2) rather than inclusion.

ChatGPT: 93.3% — Reliable but not dominant

GPT Fast mentioned Asana in 14 of 15 queries, a single miss in an otherwise strong performance. ChatGPT reliably maps Asana to work management, collaboration and project management contexts. The priority here is moving Asana higher in ChatGPT's recommendation order, not just achieving inclusion, which means deepening the public content about Asana that the model draws on.

Perplexity: 60% — The 40-point gap that matters most

Critical: Perplexity only recommends Asana in 60% of queries

Asana appeared in just 9 of 15 Perplexity queries — a 40-point gap versus Google AI Mode. XLR8 AI's data shows that the missed queries are concentrated in enterprise-intent prompts, where Perplexity instead surfaces Celoxis, Wrike, and monday.com. Perplexity's retrieval stack weights real-time, citation-rich, authoritative third-party sources differently from ChatGPT and Google. Asana's current content footprint is not yet aligned with how Perplexity assembles enterprise work management answers.
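The per-model rates, the overall figure, and the gap can all be reproduced from the mention counts reported in this article. The sketch below uses only those counts; the variable and function names are illustrative.

```python
# (mentions, queries) per model, as reported in this article.
reported = {
    "Google AI Mode": (15, 15),
    "GPT Fast (ChatGPT)": (14, 15),
    "Perplexity": (9, 15),
}


def rate(mentions, queries):
    """Visibility rate as a percentage."""
    return 100 * mentions / queries


rates = {model: rate(*counts) for model, counts in reported.items()}

total_mentions = sum(m for m, _ in reported.values())
total_queries = sum(q for _, q in reported.values())
overall = rate(total_mentions, total_queries)

# The gap is a difference of two percentages, so it is in percentage points.
gap_points = rates["Google AI Mode"] - rates["Perplexity"]

for model, pct in rates.items():
    print(f"{model}: {pct:.1f}%")
print(f"Overall: {total_mentions}/{total_queries} = {overall:.1f}%")
print(f"Google AI Mode vs Perplexity gap: {gap_points:.0f} points")
```

Note that the "40-point gap" is a gap in percentage points (100% minus 60%), not a 40% relative drop.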

Category breakdown

Work Management vs Collaboration vs Project Management: Asana's Category Map

Asana visibility rate by query category


Work Management Software queries return Asana 93% of the time, making it Asana's natural home and clearest LLM association. The 80% rates in both Team Collaboration and Project Management reflect categories where tools like Microsoft Teams, Jira, and Slack carry strong independent authority. The gap between Asana's strongest category (93%) and its weaker ones (80%) is a content and positioning alignment problem, not a brand awareness problem: LLMs know who Asana is, they just don't always route collaboration and project management queries to it first.
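As a quick consistency check, the rounded category percentages can be converted back into implied mention counts and compared with the 38-of-45 headline. This sketch assumes 15 query runs per category (45 runs split evenly across three categories); that split is an inference from the article's figures, not a number XLR8 AI states directly.

```python
# Assumption: 45 runs split evenly across the three categories.
RUNS_PER_CATEGORY = 15

# Rounded visibility percentages as reported in this article.
reported_pct = {
    "Work Management Software": 93,
    "Team Collaboration Platform": 80,
    "Project Management Platform": 80,
}

# Convert each rounded percentage back to the nearest whole number of mentions.
implied_mentions = {
    category: round(pct * RUNS_PER_CATEGORY / 100)
    for category, pct in reported_pct.items()
}

implied_total = sum(implied_mentions.values())
print(implied_mentions)
print(f"Implied total: {implied_total} of {RUNS_PER_CATEGORY * len(reported_pct)}")
```

Under that assumption the category figures reconcile with the per-model figures: both add up to the same overall count.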

Competitive landscape

Who Appears Alongside Asana in AI Answers: The Competitive Co-Occurrence Map

XLR8 AI's experiment logged not just Asana's appearances but which competitors appeared in the same answers. This co-occurrence data is how GEO practitioners measure AI share of voice — and where Asana's closest competitive threats become visible.

Total brand mentions across all 45 queries and 3 models


Wrike: the co-leader LLMs treat as Asana's twin

Wrike's 36 mentions — just 3 behind Asana's 39 — confirm what XLR8 AI sees across multiple experiments: Wrike and Asana appear together in most AI-generated work management answers, often in adjacent list positions. For Asana's GEO program, this co-leadership is the primary competitive signal. The question is not whether Asana appears, but whether it appears before Wrike, and how LLMs frame each tool's relative strengths.
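Co-occurrence counting of this kind can be sketched in a few lines. The `co_occurrence` function and the sample answers below are hypothetical and only illustrate the counting logic; they are not XLR8 AI's implementation or data.

```python
from collections import Counter


def co_occurrence(answers, target):
    """Count how often each other brand appears in answers that mention `target`."""
    counts = Counter()
    for brands in answers:
        if target in brands:
            # set() so a brand repeated within one answer is counted once.
            counts.update(b for b in set(brands) if b != target)
    return counts


# Hypothetical brand lists extracted from four AI answers.
answers = [
    ["Asana", "Wrike", "monday.com"],
    ["Wrike", "Celoxis"],            # target brand absent, so this is skipped
    ["Asana", "Wrike"],
    ["Asana", "Jira"],
]

pairs = co_occurrence(answers, "Asana")
answers_with_target = sum("Asana" in brands for brands in answers)

for brand, n in pairs.most_common():
    print(f"{brand}: in {n} of {answers_with_target} answers that mention Asana")
```

Extending each entry to an ordered list with positions would also answer the follow-up question raised above: whether Asana appears before or after Wrike in a given answer.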

Enterprise queries on Perplexity: a different competitive set

Perplexity enterprise queries surface a different competitive set

When enterprise-flavored queries were run on Perplexity ("best project portfolio management for large teams," "enterprise work management with advanced analytics"), Celoxis, Wrike, and monday.com appeared in positions where Asana was absent. XLR8 AI interprets this as a signal that Perplexity's source set for enterprise intent includes vendors with stronger enterprise-specific narratives — security documentation, compliance certifications, advanced analytics case studies — in the exact formats Perplexity weights.

GEO strategy

What Asana Should Do: 4 GEO Actions Based on This Data

Every number in this benchmark maps to a specific content or distribution action. Here is how XLR8 AI translates this data into a GEO roadmap for a brand in Asana's position.

1. Close the Perplexity gap with enterprise-grade citation-friendly content

Perplexity weights real-time, structured, third-party-cited content. Asana needs to publish long-form enterprise content — security, compliance, portfolio management, advanced analytics — in formats Perplexity retrieves: clear headings, structured FAQs, explicit citations to authoritative sources. The target: moving Perplexity from 60% to 80%+.

2. Align content taxonomy with exact LLM category labels

LLMs use "work management software," "team collaboration platform," and "project management platform" as discrete categories. Asana's product pages, comparison content, and documentation should use these exact labels — not just Asana's own brand language. Explicit category naming is how models reliably route queries to the right brand.

3. Differentiate from Wrike in AI-parseable formats

With Wrike just 3 mentions behind, Asana needs LLMs to understand the specific contexts where Asana is the better choice. Publish structured comparison content (Asana vs Wrike for marketing teams, Asana vs Wrike for product operations) with concrete differentiators that models can extract and repeat in recommendation answers.

4. Optimize for rank order in Google AI Mode — not just presence

Perfect Google AI Mode visibility means the only remaining lever is rank. Improving from #3–4 to #1–2 in Google AI answers requires deepening topical authority — more comprehensive, more cited, more structured content around Asana's core categories — combined with FAQ schema markup that Google AI Mode preferentially surfaces.
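For reference, FAQ schema markup is typically expressed as schema.org `FAQPage` JSON-LD. The sketch below generates that structure; the `faq_jsonld` helper and the question and answer text are placeholders, and whether a given AI surface actually favors pages with this markup is this article's claim rather than something the code demonstrates.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder content for illustration only.
faq = faq_jsonld([
    ("What is work management software?", "Placeholder answer text."),
    ("How does it differ from project management software?", "Placeholder answer text."),
])

# JSON-LD is embedded in a page inside a script tag of this type.
markup = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(markup)
```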

Key takeaways from the Asana benchmark

- 84.4% overall — strong baseline but not category dominance

- Google AI Mode 100%, ChatGPT 93.3%, Perplexity 60% — the model gap is the story

- Average position #3.6 means Asana is rarely the first recommendation in any model

- Wrike is the primary co-leader at 36 mentions — LLMs treat them as near-interchangeable

- Enterprise queries on Perplexity favor Celoxis, Wrike and monday.com over Asana

- Work Management is the strongest category (93%) — Collaboration and PM need reinforcement

FAQ

Frequently Asked Questions

What does AI visibility mean for a brand like Asana?

AI visibility measures how often and how prominently Asana appears when buyers use ChatGPT, Perplexity, or Google AI to research work management software. In 2026, LLM recommendations increasingly replace traditional search for B2B SaaS evaluation. XLR8 AI's benchmark gives Asana a quantified baseline — 84.4% overall — against which to measure the impact of GEO content initiatives.

Why does Asana perform better on Google AI Mode than Perplexity?

Google AI Mode draws heavily on Google's own web index, where Asana's domain authority and structured content are strong signals. Perplexity uses a different retrieval pipeline that weights real-time, citation-rich third-party content more heavily. Asana's enterprise-specific content and external citations are not yet aligned with Perplexity's source preferences, particularly for enterprise-intent queries.

What is GEO and how does it differ from SEO for a brand like Asana?

GEO (Generative Engine Optimization) is the practice of optimizing a brand's presence in AI-generated answers, rather than search rankings. For Asana, SEO improves visibility in Google's blue-link results; GEO improves visibility in ChatGPT's recommendation lists, Perplexity's cited answers, and Google AI Mode's summaries. XLR8 AI's platform tracks GEO performance with the same rigor that SEO tools track keyword rankings.

How was this benchmark run?

XLR8 AI designed and executed this benchmark on April 15, 2026. 45 intent-aligned queries were run across three categories — Work Management Software, Team Collaboration Platform, and Project Management Platform — with 15 queries per model across Google AI Mode, GPT Fast (ChatGPT), and Perplexity. Brand mentions, rank positions, and competitor co-occurrences were logged by XLR8 AI's automated platform. All numbers in this article were verified against raw database records before publication.